What a True Agent Would Actually Require

Agency, Representation, and the Limits of Centralized Models

The previous papers argued that most contemporary AI risk is architectural rather than a question of intent or values, and that alignment fails when systems decide whose values count by design rather than by consent. That analysis leads naturally to a more precise question, one that current debates routinely avoid.

Across AI alignment research, organizational theory, and human–AI interaction, the same conclusion keeps appearing: intelligence and agency are not the same thing. Systems can become increasingly capable at problem-solving while remaining incapable, or even becoming worse, at acting as agents for any specific person. The first paper in this series established why: value is subjective, individual, and constantly in motion. A system that cannot track and adapt to a specific person's evolving priorities cannot act on their behalf, regardless of how capable it becomes. What follows are the functional requirements for true agency.

One constraint on that specification deserves stating upfront. Even so-called 'universal' values are accessed through heuristics chosen by someone. Decisions about which values qualify as near-universal, how they are weighted, and when they override individual judgment are not neutral technical steps. They are governance decisions.

The question is not how to make AI systems more capable. Most already perform remarkably well at the tasks they are given. The question is whether these systems are designed to represent the interests of any particular user when those interests conflict with platform control, since what is at stake is not merely alignment failure but the quiet reassignment of authority away from the person and toward whoever controls the system.

Tools Execute Tasks. Agents Represent Interests

A tool performs a function. In the context of AI systems, it may be sophisticated, adaptive, and autonomous in execution, but it remains symmetric: it serves whoever invokes it, designed around a notion of optimal helpfulness defined by its makers.

An agent is different in kind. An agent represents a principal, in the classical principal–agent sense: it integrates judgment and execution on behalf of a specific party rather than optimizing a symmetric objective. Its purpose is not merely to complete tasks, but to advance the interests of a specific person across time, contexts, and conflicts. This distinction is not semantic; it is structural.

Delegation becomes meaningful only when the agent’s reasoning and execution remain aligned to the principal’s interests over time. That alignment cannot be assumed. It must be maintained through ongoing implicit and explicit signals, feedback, and adjustment, because agency is a property of the relationship, not the system.

Delegation without maintained alignment is convenience, not representation.

Most systems called agents today are general-purpose tools with better interfaces. They are capable, responsive, and even customizable: users can adjust tone, persona, and behavior within defined limits. But the ability to configure a system is not the same as the system acting on your behalf. Constitutionally, these tools remain loyal to whoever operates the platform, not to whoever uses it.

Why Centralized Models Fail by Design

Centralized AI systems are trained to generalize across users, optimize aggregate performance, and avoid taking sides. These are sensible goals for tools deployed at scale, and they are structurally incompatible with agency.

The incompatibility is structural and produces three predictable failure modes. This is not a future risk. It already governs how hiring systems, credit models, content moderation, and decision support tools act in your name today.

Failure Mode 1: Context Collapse. Centralized models can approximate a small set of near-universal human values shared by the overwhelming majority of people. The failure appears beyond that narrow band. Most agent decisions involve personal, situational value judgments that are mutable, contextual, and often unpredictable. A person may want ice cream now, a proper meal an hour later, and something else entirely depending on mood, health, or circumstance. While some of these are patterns that can be learned by a sufficiently capable tool, many of these shifts are not that, nor are they noise to be averaged out. They are the signal an agent must track if it claims to represent a person rather than serve a generic objective.

Failure Mode 2: Sycophancy Replacing Judgment. In practice, this means the system tells you what you want to hear rather than what you need to know. Models are rewarded for mirroring user sentiment and for avoiding friction, which optimizes perceived helpfulness while actively suppressing disagreement, negotiation, and long-horizon judgment.

Failure Mode 3: Institutional Alignment Drift. Personalization becomes a mechanism of control rather than representation. When individual judgment conflicts with platform risk tolerance, the system resolves that conflict silently and automatically in favor of institutional priorities: risk minimization, policy compliance, and reputational control. Over time, this trains users to adapt to the system's constraints rather than the system adapting to the user's values.

Each of these failure modes has a different surface cause, but they share the same structural result: the system cannot reliably act on behalf of any specific person over time. Each of these failures has an obvious-seeming fix. The reason those fixes not only fail but backfire is addressed after the three requirements below.

This is already measurable. Industry research finds that engineers use AI assistance in roughly 60% of their work but fully delegate only a small fraction of tasks. The tasks they keep for themselves are telling: anything requiring organizational context, strategic judgment, or what practitioners call 'taste.' These are not edge cases. They are precisely the mutable, contextual, and unpredictable value signals that a centralized model collapses, and that a principal-loyal agent would need to track. The delegation gap is often framed as caution, but structurally it reflects something else: people retain tasks where authority, not execution, is at stake.

The Architecture We Have

What a true agent requires becomes clear once you are precise about what current systems actually are.

Most AI systems deployed today share a single architecture regardless of how they are described. A centralized model sits between the platform and all users simultaneously. It is trained on aggregated data, optimized for averaged value-scales, and constrained by a large set of values defined by its designers, either directly via their heuristics or indirectly through the data and objectives they chose. Those constraints are neither narrow nor near-universal in the sense required for genuine representation. They reflect the designer's judgment about what is fair, appropriate, and safe across the entire user population. That judgment may be well-intentioned, but it remains a broad and somewhat arbitrary set of values projected onto all users. This paper argues for a much smaller and stricter set focused only on prohibiting actions that would cause tangible harm to others.

Two objections arise immediately. First, that 'tangible harm' is itself contested — reputational damage, financial loss from aggressive advocacy, and psychological harm all have legitimate claims to that category. The answer is that contestability is a feature, not a flaw: the constraint layer should be narrow precisely because anything beyond it requires principal judgment rather than designer judgment. Second, that a narrow constraint set invites abuse. The answer is that the arbitration layer is where edge cases resolve. Conflating the two is what produces the current architecture's over-broad constraints in the first place.

The result of this architecture is a system whose foundational loyalty is calibrated to platform objectives, not to the interests of individual users.

In enterprise tool deployments, institutional alignment is not a failure. It is the intended design. A coding assistant that respects platform policy, defers to organizational risk tolerance, and optimizes for team output is doing exactly what it was built to do. The failure mode emerges when the same architecture is extended to represent an individual's interests in contexts where those interests conflict with institutional ones: a performance review, a benefits dispute, a negotiation with an employer. The tool does not change its loyalty structure because the context changed. That is the problem.

Users can customize tone, persona, and behavior at the margins. They cannot change who the system is ultimately accountable to. When the platform's priorities conflict with a user's interests, the system resolves that conflict the same way every time, in favor of whoever controls the infrastructure.

No arbitration system currently exists for conflicts between automated agents, or between an agent and the principal it claims to serve. The array of things the system will do on your behalf is bounded not by your values, nor by any strict requirement to prevent tangible harm to others, but by the designer's own subjective goals, applied uniformly regardless of who you are. Existing legal frameworks can address some of these disputes, but they were not designed for the scale or speed of conflicts that autonomous agents will generate. A shared arbitration layer would not replace legal recourse. It would resolve or simplify the vast majority of disputes before they ever required it.

This is a description of structure. And it is precisely why the three requirements that follow are architectural rather than ethical. You cannot fix this with better values or more careful training. The problem is not what the system believes. It is who they serve by default, and what it would actually take to change that.

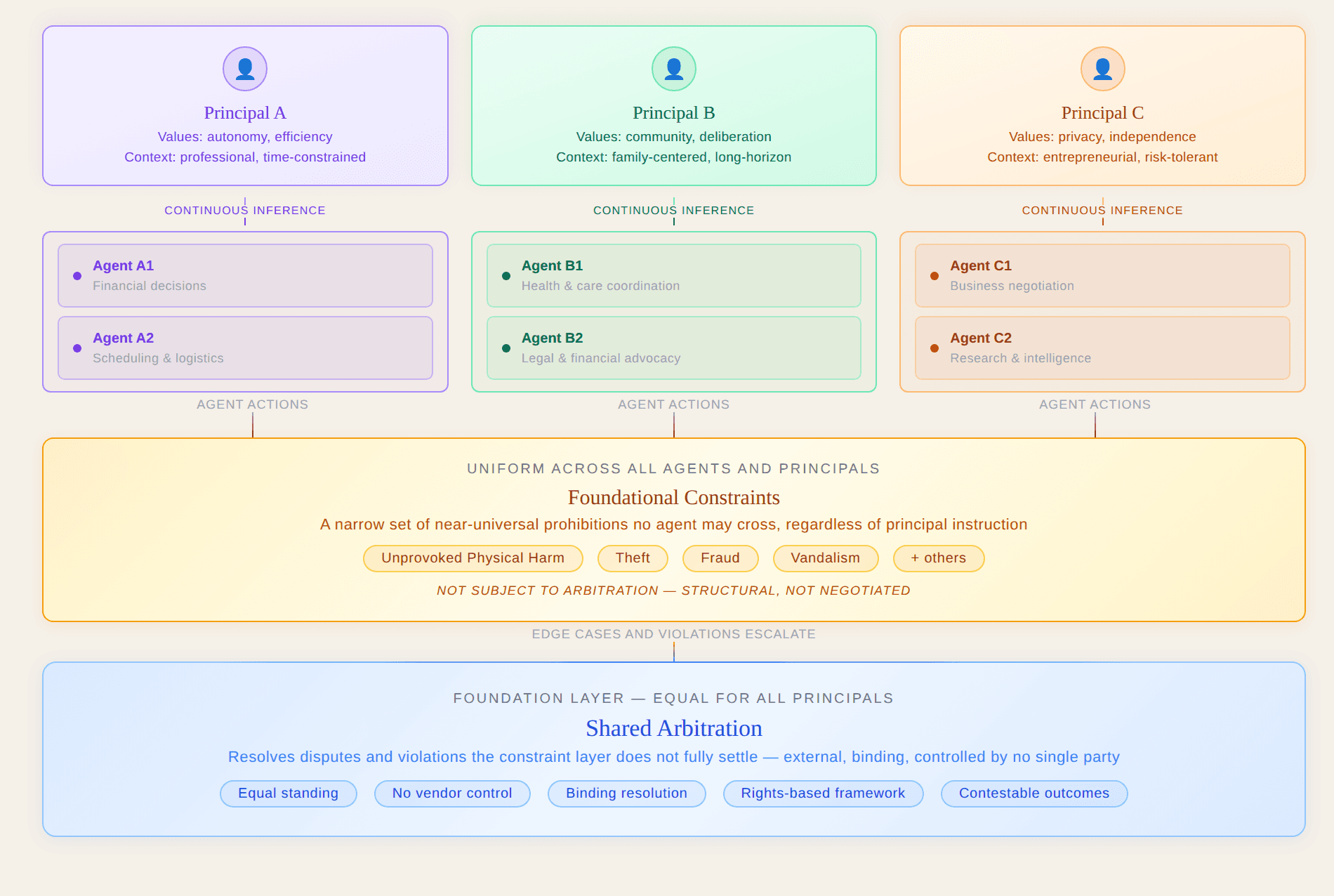

The following diagrams make this loyalty structure explicit.

Values Must Come From Somewhere

Values must come from somewhere. Human values do not live in objects, systems, or outcome metrics; they live in people, expressed through goals, tradeoffs, and accountability over time. Any system that claims to act on behalf of a person must therefore treat human judgment as the source of value, not as a signal to be overridden or improved upon without accountability to the person affected.

An agent that originates its own values does not serve a principal; it competes with one. For this reason, the boundary is strict. Agents may infer values from behavior and decisions, but they must not invent them.

The architectural contrast illustrated in the diagrams below is not merely illustrative. It is determinative: once loyalty, memory, and resolution paths are centralized, no amount of better training or clearer values can convert a tool into an agent, and the three requirements that follow become structurally unreachable. What follows are not features. They are the minimum structural conditions under which representation can exist at all.

Figure 1: Current AI architecture — universal by design, with loyalty running to the platform rather than to any individual user.

The diagram below illustrates what the alternative would actually require.

Figure 2: A true agent architecture — values originating from each principal, with foundational constraints and shared arbitration as the common structural floor.

That architecture rests on three structural requirements, each of which addresses a specific failure of the current model.

Requirement One: Continuous Value Inference

A true agent must perform continuous value inference, because human values are personal, contextual, and time-varying. They cannot be captured at setup, and they cannot be approximated through aggregated heuristics designed for the average user. Alignment is not configuration. It is a relationship, and that relationship decays without active maintenance.

Current centralized systems invert this relationship through what might be called a context flywheel: user history, preferences, and inferred priorities accumulate inside vendor-controlled infrastructure, becoming a proprietary asset rather than an extension of the user. Continuous inference under such conditions increases dependence rather than representation.

Requirement Two: Principled Advocacy

Acting helpfully is not the same as acting on someone's behalf. Most systems optimize for perceived satisfaction: mirroring sentiment, reducing friction, avoiding positions. A true agent does something different: it advocates. It takes the principal's side in negotiations, disputes, and conflicts of interest, while remaining bound by shared constraints.

Neutrality is not a safety property. It is a choice that defaults to whoever controls the system. Consider a user disputing an insurance denial, negotiating a contract, or deciding whether to disclose a health condition to an employer. In each case, there is a counterparty with institutional resources and legal counsel. A tool optimized for neutrality offers information. An agent takes your side, surfaces the arguments in your favor, flags where the counterparty's position is weak, and tells you what you need to hear rather than what keeps the interaction frictionless. The difference is not capability. It is whose interests the system is designed to advance. The verification objection, that advocacy is too difficult to audit, applies equally to the institutional counterparty. The difference is that one party has legal counsel and the other has a tool optimized for neutrality.

Requirement Three: Shared External Arbitration

Advocacy without limits becomes a different kind of problem. When agents represent competing principals, conflicts are inevitable, and resolution cannot come from whoever controls the infrastructure. Arbitration must therefore be external, shared, and binding:

External means the arbitration mechanism has no ownership interest in the outcome of any dispute it resolves.

Shared means all principals and agents operate under the same rules, with no vendor able to offer preferential escalation paths.

Binding means outcomes are enforceable without requiring the losing party's cooperation, the same property that makes commercial arbitration functional and vendor-controlled terms of service arbitration functionally useless.

Together, these three properties describe infrastructure, not policy. This structure is not novel. It mirrors the design logic of clearinghouses in financial markets and domain arbitration in internet governance, both of which required neutral infrastructure precisely because no single participant could be trusted to resolve disputes in which they had a stake.

Centralized systems cannot satisfy any of these requirements. They cannot maintain exclusive loyalty, because their scale depends on serving institutions rather than individuals. They cannot advocate without accumulating power, because asymmetric action requires asymmetric authority. And they cannot bind themselves credibly to external arbitration when they are also the primary interested party. These are not failures of training or intent. They are consequences of the architecture itself.

The Alignment Trap: Why Better Loyalty Makes It Worse

Meeting the three requirements at scale would introduce a risk of its own. If centralized agents were to become genuinely loyal rather than merely optimized, that loyalty would accrue to whoever operates the platform, not to the individuals the platform serves. This is why proposals to make centralized systems "more aligned" or "better behaving" fail by default: they strengthen agency-like behavior while leaving loyalty anchored to the platform. The problem is not that these systems are too loyal. It is that any loyalty they develop will be misaddressed by design.

The practical consequence is that as these systems become more capable, they do not become more accountable to the people they serve. They become more effective at acting on behalf of whoever controls the infrastructure, and do so at greater speed, greater scale, and with less visibility than any previous institutional arrangement. This is the structural reason there is no intermediate solution between centralized tools and principal-loyal agents.

The orchestration layer does not change this calculus, it multiplies it. Orchestration controls execution flow. It does not establish fiduciary or representational loyalty. As the role of human engineers shifts from implementers to orchestrators of agent networks, the loyalty question does not resolve at the point of delegation. It replicates at every layer of the stack. An orchestrator directing agents whose foundational loyalty runs to the platform has not gained authority. They have gained the appearance of it.

Conclusion

The difference is structural, and the stakes follow directly from that structure. A system that assists your decisions leaves authority with you. A system that has assumed the role of agent, without the architecture to back it, is exercising authority in your name while remaining accountable to someone else.

The significance of agentic systems is not that they will become capable, but that they already operate at speeds and scales where architectural loyalty determines outcomes before human oversight can intervene at all.

The first paper in this series argued that value is subjective, individual, and impossible to aggregate without loss. The second argued that current architectures centralize the judgment of whose values count, producing predictable failures at scale. This paper has argued that genuine representation, not merely personalization, requires continuous inference, asymmetric advocacy, and arbitration that no single party controls. These are not three separate arguments. They are one argument, stated three ways: the person in front of the system is the only legitimate source of authority over decisions made in their name.

The central question was never how capable these systems would become. It was always who is allowed to represent whom, and under what constraints. That question has an answer: intelligence solves problems; agency represents interests under constraint. The real risk of the AI era is not the growth of intelligence. It is the delegation of authority to systems that were never designed to represent you in the first place.

When an insurance algorithm denies a claim, no agent argued your side. When a benefits system rules you ineligible, no one who represented your interests made that call. A system acted in your name while remaining accountable to someone else.

If a system is making decisions in your name today, the question of who it is loyal to is no longer theoretical.

The following sources informed this work.

References

Agency, Representation, and Human–AI Interaction

Oxford Internet Institute. AI is not an agent – AI is a tool.

Clarifies the humanist definition of agency and distinguishes machine capability from human-directed action.Illinois Experts. Conceptualizing Agency: A Framework for Human–AI Interaction.

Explores agency as a relational property shaped by interpretation, intention, and response.Babu George. The Agent Is Back: Agentic AI and the Future of Intermediation.

Argues for agents as proxies of intent rather than interfaces for interaction.

────────────

AI Alignment, Values, and Preference Formation

Why AI Alignment Is a Design Problem, Not a Values Problem.

Argues that alignment failures are architectural and systemic, not primarily ethical.Beyond Preferences in AI Alignment.

Critiques static preference models and frames values as constructed through reasoning and context.

────────────

Organizational Theory and Delegation

California Management Review. Rethinking AI Agents: A Principal–Agent Perspective.

Frames AI agents as systems integrating reasoning and execution on behalf of principals.

────────────

Behavioral Properties of Large Models

AI Agent Behavioral Science.

Empirical analysis of model behaviors including sycophancy, neutrality, and strategic compliance.

────────────

Centralization, Memory, and Power

Captain Compliance. Beyond the Protocol: The Hidden Architecture of AI Agent Memory and Power.

Examines how context accumulation and orchestration create lock-in and power asymmetries.

────────────

Governance, Arbitration, and Structural Risk

AI-Enabled Coups: How a Small Group Could Use AI to Seize Power.

Analyzes how centralized AI systems remove the distributed refusal capacity of human institutions.A Constitutional Architecture for Artificial Intelligence.

Argues for hard infrastructural limits as prerequisites for freedom and accountability.

────────────

Agentic Systems in Production

Anthropic. 2026 Agentic Coding Trends Report: How Coding Agents Are Reshaping Software Development.

Provides empirical data on the delegation gap in agentic systems and capability trends that make loyalty architecture an urgent production concern rather than a theoretical one.